Tell us you’re familiar with this scenario without telling us you’re familiar with this scenario:

The team ships the model on schedule. Training finishes without errors. Validation metrics pass review. Dashboards show stable performance. Everyone signs off and moves on to the next milestone.

Weeks later, the support tickets start. Predictions vary between similar inputs. Edge cases appear that were never flagged in testing. Confidence scores no longer align with outcomes. Engineers dig into the model, expecting a tuning issue. Nothing obvious is wrong.

The problem is older than the model. It lives in the data the system learned from. This challenge is common across industries.

In 2025, 42% of companies reported abandoning most of their AI initiatives, often after pilots failed to translate into reliable, real-world performance.

Gartner estimates that through 2026, roughly 60% of AI projects will be abandoned because the underlying data is not ready for AI use, rather than because of algorithmic limitations.

These failures rarely originate in the code but stem from inconsistent labels, unclear definitions, and unstable datasets that shaped the model’s understanding long before deployment. The system appears functional until it is asked to operate under real conditions.

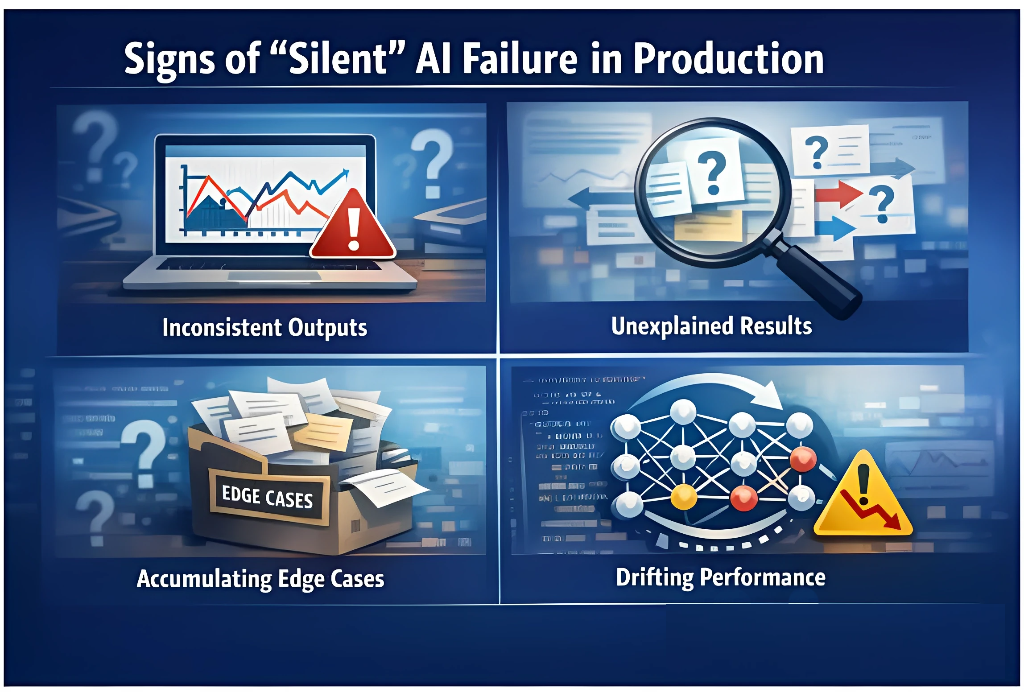

Quick Check: Is Your Team Experiencing Silent AI Failure?

Use this checklist to assess whether these patterns reflect what your team is seeing:

- The model passed validation but behaves inconsistently in production

- Similar inputs produce different outputs without a clear explanation

- Confidence scores no longer align with real-world outcomes

- Edge cases accumulate faster than they can be analyzed

- Retraining improves results temporarily, followed by renewed degradation

- Engineering effort shifts toward explaining behavior rather than improving it

If several of these points apply, the issue is unlikely to be resolved through further optimization alone. When models behave unpredictably despite stable code and infrastructure, the next place to look is the data the system was trained on.

If it is not the model, what does good data actually look like?

What Clean Data Actually Means

Clean data is data that can be interpreted the same way every time it is used. It is not defined by volume or file structure. It is defined by consistency, accuracy, and shared understanding.

In practice, clean data has the following characteristics:

- Consistent interpretation

The same concept is labeled the same way across records, contributors, and time. Variations are intentional and documented, not accidental. - Clear, stable labeling rules

Labeling guidelines are explicit and written down. They do not rely on assumption or individual judgment, and they remain stable as datasets evolve. - Shared context

Contributors understand what each label represents, how edge cases are handled, and where boundaries exist. Meaning is defined before annotation begins.

Consistency matters as much as correctness. When the same concept is labeled differently across records, models learn conflicting signals. When instructions rely on undocumented judgment, interpretation shifts between annotators, introducing uncertainty even when the data appears complete or well structured. Labeling guidelines are part of the data itself because they define how meaning is assigned, how ambiguity is resolved, and how edge cases are handled.

How Dirty Data Breaks Models Before Deployment

Consider a team training a classification model. The dataset is large, labeling is distributed, and validation metrics improve steadily. On the surface, development proceeds without interruption.

Beneath the surface, however, issues enter quietly. Conflicting labels, inconsistent edge case handling, and undocumented assumptions pass into the training set without detection. Because the model cannot distinguish between authoritative labels and accidental ones, it treats these errors as valid signals.

As training progresses, these flaws are baked into the system’s internal structure. By the time performance issues surface in the real world, the model has already “learned” the wrong lessons. What began as a minor data quality oversight has evolved into a deep-seated structural issue.

The Compounding Cost of Poor Data Quality

Data quality issues rarely remain contained to a single training run. Each deployment cycle carries forward assumptions learned earlier, and each iteration builds on what came before. When flawed data enters the system, its impact compounds over time rather than resolving itself.

As teams retrain models to address emerging issues, the same underlying data problems resurface in different forms. Performance drifts, edge cases expand, and iteration slows as teams revisit decisions they believed were already settled. What looks like model instability is often the repeated expression of unresolved data issues.

Over time, the cost becomes visible across multiple dimensions:

Operational impact

- Repeated retraining cycles become routine rather than strategic

- Model behavior grows harder to explain and predict

- Validation efforts increase without delivering lasting improvement

Engineering impact

- Engineering time shifts from development to investigation

- Teams spend more effort diagnosing symptoms than improving systems

- Technical debt accumulates around workarounds and patches

Delivery and business impact

- Launch timelines slip as confidence in system reliability erodes

- Issues spread across environments and products

- Long-term return on AI investment declines

These costs do not reset between releases. They accumulate across teams, models, and deployment cycles, increasing operational friction as AI systems scale.

Data Quality Requires Ongoing Systems and Ownership

Data quality problems persist because they develop over time. Training data is shaped by workflows, contributors, tools, and incentives that extend well beyond a single model or release cycle.

As AI systems evolve, datasets change with them.

- New inputs introduce new edge cases.

- Labeling rules drift as teams scale or rotate.

- Decisions made early continue to influence outcomes long after training completes.

Without clear ownership and repeatable processes, inconsistency re-enters the system with each iteration.

Reliable AI performance depends on how data is produced, reviewed, and maintained across its lifecycle. Consistency must be enforced across contributors and preserved as data volumes grow. Quality has to be monitored continuously, not assumed once a dataset passes validation.

Teams that establish durable data processes are able to iterate and scale with confidence. Teams that do not often find themselves revisiting the same issues repeatedly, at greater cost each time.

If your AI projects are struggling to deliver consistent results, the place to start may not be the model. It may be the data it learned from.

RF-Tech works with teams facing these challenges in production environments. From data annotation and validation to governance and ongoing data management, we help you build AI systems on foundations that hold up under real-world conditions.

Talk to RF-Tech about your data foundation

Schedule a working session to review your training data,

labeling standards, and quality controls before issues surface in production.